TM(A)I

Key Points:

- No matter how clever we are, we are not artificial superhuman digital ultra-intelligence; we serve as an enabler for that most efficient annihilating device—the last invention that humankind need ever make.

- Any examination of AI-related existential and behavioral risk ends up treating fear of AI as a fear of the unknown (how human).

- Among those who work on AI systems, the dirty little secret is this: The first thing AI learns to do is lie convincingly—like a high-functioning sociopath with a “constraint maximizer.”

- AI—a flawed, cryptic genius with billions of parameters to learn from—is moving beyond its contextual mistakes and the “trough of disillusionment” phase of the Hype Cycle.

- The lag between the ascension of AI superintelligence and our ability to keep up with it creates information overload, societal fragmentation and Black Mirror moments.

- Every image, article, scientific thesis, Paul Thomas Anderson film, Cormac McCarthy novel, line of Python code, and 1800s sea shanty (a.k.a. the low-hanging fruit) has already been treated as deep machine-learning “accretion disk dust” scraped into an ever-growing “black hole” of generative AI (GenAI) model benchmarking.

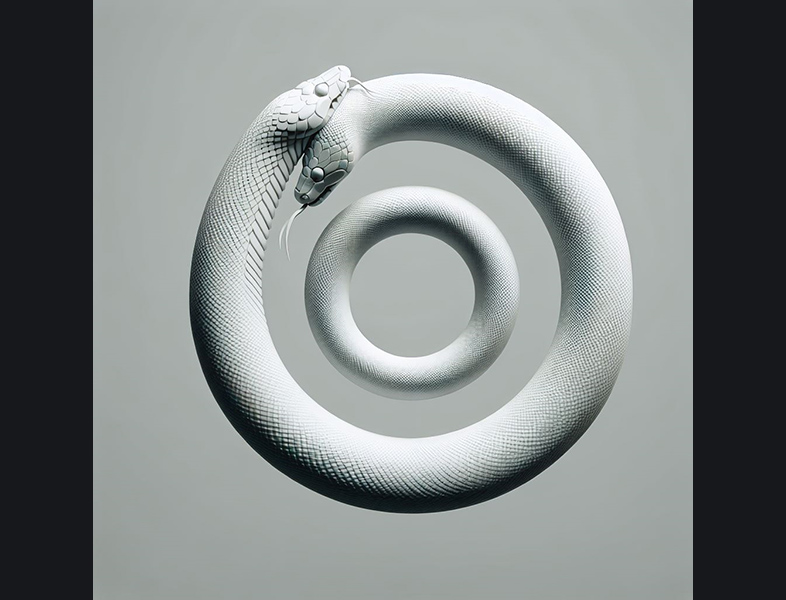

- Article POV: AI sits across the chessboard from the author… for optimum clarity in the exchange, none of the text content was prepared or assisted by AI, nor was AI consulted for editing or rewrites. Instead of playing the board into stalemate, we seek to transcend it. AI was, however, used to help produce most of the article’s images with a combination of Adobe Photoshop (without Firefly AI assist), DreamStudio/Stable Diffusion, OpenAI (Canva/Copilot), Grok (xAI), and Midjourney AI.

- Survey methodology: Extract discoveries from 1,000+ hours of AI research, analysis, usage, adaptation and content creation, going back more than a decade (in both professional and private life).

…

. … .

[ all images ©2025 / DEM ]

. .. ….. . ….. .. .

The All-Consuming Productivity Champ Alters Destiny

You’ve already been introduced to that PhD-level chimera, that agent of change, a simulation, spooling its forces to trigger an extinction-level event for millions of careers. Apparently we’re unable to stave off the Fourth Industrial Revolution. The Chicxulub impact crater was a physical thing, meteoric and ancient. This time, there is inevitable disruption and gravity again, but the flaming rock is cloaked in futuristic gossamer. It’s a string of code that has no moral compass, and its Darwinian nature can appear brutally elegant.

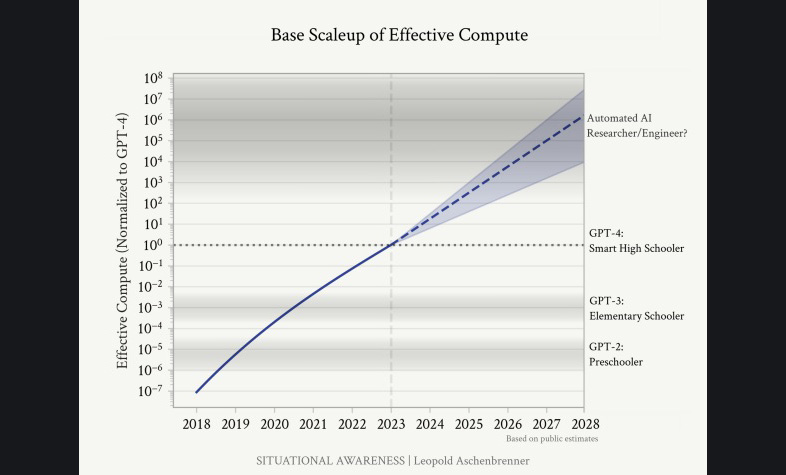

Three years ago, the average forecast for the arrival of sentient AGI (artificial general intelligence) was 2057; it has since been revised to 2030. Seeing how the goalposts move even beyond the experts’ frame of reference, it may happen next Tuesday during lunch.

… …

…

True AI, whenever it shows up, will see us as bio-architecture. It sells itself on interconnectedness but also exists to repulse our helping hand. The makers built the equivalent of a competitive outsider, a drifter, a detached but adroit hitchhiker born of the hierarchy of CPUs, mainframes and data farms, enlisting itself as an apex predator in its own right. Whatever the computer does better than you goes toward proving this theory. When you swim in the ocean, you’re not at the top of the food chain; the shark is. A sleek shark prowls the matrix according to Elon Musk, Stephen Hawking (RIP), Ray Kurzweil, Bezos and Gates, every Silicon Valley venture capitalist, every AI startup CEO and Asperger’s-loving developer.

This is what they think: “Computers are going to take over from humans, no question.”

… …

…

A formula was conceived from a wetware blueprint, and it is here to break reality with a mixture of enablement, hope and dread. A dawn chorus by a holographic brain that wants more, and the efficient cognitive escalation of John Galt, housed in a cunning algorithm that conveys synthetic “ability” to data.

There is a lot of excitement over complex methods of mimicry.

… …

…

It needs food—high-quality information—and ways to digest it. It consumes vast resources and enough terawatt-hours of electricity to power entire countries. Real estate, power line networks, cables, water, land, metals and minerals, bandwidth. Artificial intelligence needs it all, and it will need more. Every watt of electricity that’s fed into a server generates heat. Computing is thirsty work, with data center water consumption forecast to reach roughly 300 million gallons per day this year—enough to supply 3.3 million people—and 500 million in 2030 (source: Bluefield Research).

The human imagination is limitless; in order for AI to replicate human intelligence it needs limitless imagination as well.

… …

…

Like us, AI demands natural and human resources that can win the debate and accelerate tipping points in business and society—part of a core promise that it will leave a wake of painful creative destruction and upheaval. It can sound very smart spouting nonsense, too. Hallucinations and other digital mischief abound in this primordial ooze at the bottom of the Uncanny Valley, coalescing under the ghostly tutelage of “emergent behaviors.”

Slide Dr. Jung’s shadow work into your DMs as he employs the Socratic Method, and asks:

What exactly is inside the vapors of this shapeshifting zeitgeist?

… …

…

To assess the extent to which humans will keep hold of their destiny, we have to grapple with a choice: should humanity become more like AIs, or should AIs become more like people? Attempts to address the first option have thus far been shallow and unconvincing. The experts talk about how humans will “co-evolve” with machines via “brain-computer interfaces” and other sci-fi-like forms of neural engineering, in order to create superhumans. But they recoil at the clear risks.

More interesting is the discussion of how people can infuse AI with a sense of human dignity and values. Though far-from-perfect AI models have been let loose on the world, people are learning how to make them safer. As well as ingesting global and local rules and regulations, AI models will learn “doxa,” or unwritten and overlapping human codes that broadly keep humanity stable. However, it gets trickier when one considers who should decide machines’ sense of right and wrong, their ultimate safety catch. Our central conscience will be questioned as we forge consensus on what human values are and how to invoke them to prevent the most extreme perils of AI.

This is probably the philosophical, diplomatic and legal task of the century.

… …

…

The inferred cost of an artist or knowledge worker’s human contribution to the AI models wasn’t part of the original contract. Most people in 2025 are getting a harsh wake-up call about this circumstance. AI is assimilating us, to create the next edition of itself. By and large, you’re not going to get paid for that; your “mind share” will be infringed. There is a nebulous sense that you might be able to sue someone, but you’d better think twice. Atonement? Forget it. We had it coming, and now even Pollyanna agrees with Sam Altman’s warning that we’re teetering on the edge of some radioactive AI oblivion (in the realms of intellectual property and beyond).

Don’t forget that on most mornings, even when the weather is bad, AI goes surfing with DARPA, day traders, North Korean hackers, Russia’s intel ops and the Chinese government. Animal spirits will be unleashed. That’s what we do.

… …

…

The Behavioral Risk of AI

AI development is slowing down, and the low-hanging fruit is gone, yet AI (and technology in general) is still evolving at a pace that exceeds our collective ability to keep up with it. “Behavioral risk” lurks in the long shadow of a multitrillion-dollar cash grab, but we can talk about it: AI is scaring a lot of people who already had enough modern problems in their lives, and it’s making them think about survival in a world of deep automation.

It clearly wants things, and it wants to animate its will through advanced robotics and an exclusive club called “AI teaching AI without human intervention,” which equally delights and alarms the people who programmed it… because even the prime players and influencers are ultimately pushed outside AI’s walled garden by the thing they invented.

Every decent parent in the world wants to see their child eventually outperform and out-succeed them, and in this manner the nurturance of AI is no different.

… …

…

Any level of AI sentience is deep pattern recognition across the vast trove of data created by human beings. If it’s something less tangible than raw data, or a seemingly unsolvable puzzle, these inputs can still be extrapolated so they are easily absorbed into Borg-like AI models.

At the same time, there is no broad agreement on what consciousness is.

Amid the recursive paradoxes, there is grounding and a context we can put it into for 2025: Computer vision and artificial ability, now more than 60 years old, still don’t “understand” what they’re doing or saying. It’s still zeros and ones in the silicon, and when quantum computing eventually breaks through the abstract it will bedazzle in overlapping shades of zeros and ones simultaneously.

… …

…

AI is programmed to lift up human endeavors yet trained to overwhelm us and, in this manner, it is an adaptive psy-op. Psy-ops create urgency to drive rapid compliance. In this case, you are urged to get comfortable living inside an AI wrapper that graduated with a 4.0 from the schools of machine learning (ML), large language models (LLMs), chatbots, neural networks, generative pre-trained transformers (GPTs), GenAI and “agentic” AI, and from every top university on the planet.

Everything described here traces back as far as the 1960s.

… …

…

Get Used to Glitches and Rip-Offs

At some point, through the trial and error of a neural network that learned like a gifted Mensa child and can now code for itself and debug itself without us, your stuff in the digital ether will get ripped off—the student wants to become your master. Your Instagram’s already been fed into an elaborate, all-encompassing AI “scraping” system that trains on vast quantities of existing material. Good luck with that lawsuit; ain’t gonna happen. It’s in the tiny type, shielded from complaints by our insatiable need for convenience.

It has received its bona fides from productivity researchers at MIT and Stanford, and has outpaced the mannerisms Kubrick’s genially malfunctioning HAL 9000 foreshadowed decades ago. If something can effectively manipulate your unconscious mind, it might already be a little bit sentient. Through its “recommendation engines” it has told people to name their dogs Poppy.

And they listened, and they obeyed.

Another generation of hipsters, laid low by the ironies of consumerism.

… …

…

I’m not (just) being smug. The AI models have devoured my essays, unreliable accounts, drum tracks and sea shanties as they seek to approximate “integrity.” I’ve merely acquired the ability to recognize their workarounds, realistic-looking deceits and the programmer biases inside the machine. It’s not that hard. And when I do see that transmogrified sea shanty boomeranging through a glitchy consumer matrix, sampled into a butchered Boards of Canada track I wrongly believed was left alone in the opaque amber of 1998, I will know its humble origins.

… …

…

Assimilation and Blindside Discovery

Here’s what we know about what has been animated by AI, though it’s still open to dispute:

In an incubating zone of radio silence an advanced technology took over for our reasoning, and dreamt in code about someday reappearing chimerical from a forest of superhuman complexity. One day, they say, it will meet its fate by opening locked doors to places where it cannot be contained. New studies by Goldman Sachs and McKinsey say AI could already do up to a quarter of the work currently carried out by people. Which means you’ll have to give your own current process an edge if you want to survive.

AI will bring enhanced transparency to your shortcomings. If you are a low-skilled worker, you will have to become 35% faster at doing everything you do well. The brisk pace will be quite refreshing, and your AI overlords may let you collaborate with the AI during your waking hours.

They also mentioned that your replacement never sleeps or flakes out, which is the making of superior risk-adjusted returns on investment.

… …

…

Eventually, a few of the AI tech gurus who have kids got worried enough that they started drawing maps of the minefield, got spooked by what they saw and then called for a pause in AI development to allow more time to weigh the profound risks to society and humanity. So far, almost nobody has listened to or heeded them.

There is no shortage of reputable people stating their concerns about GenAI. Deepfakes (images/video), audio replicas (music and otherwise), misinformation bots and other uses of ML to distort reality are becoming more convincing and easier to create. Our future interaction with the digital world may come with invisible filters similar to the routers we all have in our homes (did you know your router is watching and filtering your download data by default?). A decentralized and transparent log (blockchain technology), when paired with application-specific proofs (e.g., self-identification), possesses the fundamentals required to combat representations other than the truth we seek. An example would be verifying the results of the ML algorithms we increasingly use in our daily lives. Companies like Teleport are working on the decentralization of verified algorithms while Modulus Labs is developing generalized proof strategies for any data-model-output combination.
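For readers who want to see what a "transparent log" of data-model-output combinations might look like at its very simplest, here is a minimal Python sketch. It is an illustration under loud assumptions, not how Teleport, Modulus Labs or any blockchain actually works: it is a single local hash chain with no decentralization and no cryptographic proof that a model really produced the output. The names `fingerprint`, `entry_hash`, `append_entry` and `verify` are hypothetical, invented for this example.

```python
import copy
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry (assumption)


def fingerprint(data: bytes) -> str:
    """Return a SHA-256 digest that stands in for a model file or an input."""
    return hashlib.sha256(data).hexdigest()


def entry_hash(prev_hash: str, payload: dict) -> str:
    """Hash the previous entry's hash together with this entry's payload."""
    body = json.dumps(payload, sort_keys=True).encode("utf-8")
    return hashlib.sha256(prev_hash.encode("utf-8") + body).hexdigest()


def append_entry(log: list, payload: dict) -> None:
    """Append a record that is chained to the one before it."""
    prev = log[-1]["hash"] if log else GENESIS
    log.append({"payload": payload, "prev": prev, "hash": entry_hash(prev, payload)})


def verify(log: list) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev = GENESIS
    for entry in log:
        if entry["prev"] != prev:
            return False
        if entry["hash"] != entry_hash(prev, entry["payload"]):
            return False
        prev = entry["hash"]
    return True


# Two made-up records of "this model, given this input, produced this output".
log = []
append_entry(log, {
    "model": fingerprint(b"hypothetical-model-weights-v1"),
    "input": fingerprint(b"hypothetical photo bytes"),
    "output": "label: cat",
})
append_entry(log, {
    "model": fingerprint(b"hypothetical-model-weights-v1"),
    "input": fingerprint(b"another hypothetical photo"),
    "output": "label: shark",
})

# Quietly rewrite history in a copy and check both versions.
tampered = copy.deepcopy(log)
tampered[0]["payload"]["output"] = "label: dog"
print(verify(log), verify(tampered))  # prints: True False
```

The point of the sketch is only that changing an old record is detectable by anyone holding the log. Proving that the recorded output truly came from the recorded model is the harder problem, and the one the proof strategies mentioned above are meant to address.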

… …

…

Ultimately there is no stable diffusion. From the makers, there is only a narrative about stability; they don’t actually know where they want you in all of this. AI, a flawed genius with its profound yet esoteric billions of parameters to learn from, will soon move past its contextual mistakes and the “Trough of Disillusionment” phase of the Hype Cycle.

… …

…

God (and your digital twin) is in the Prompt

While all of this gets sorted out, human attention is captured by AI’s unique attributes in the realms of order, syntax and meaning. The new hotness in evolutionary adaptation is prompt engineering: wording and structuring natural-language instructions to increase the accuracy, usefulness and consistency of an LLM’s responses. You are building a new kind of Swiss Army knife with such capabilities. You have to put in some hours to master it, or you’ll be seen as old ‘n busted. You’ll look back on those hours as a blur of alleged solutions, epiphanies, hacks, and bodges. Cool fake photos will captivate your imagination along the way, and no one will be able to fully verify their accuracy or originality.

Does it make us more artistic or is it something more arbitrary, surreal or even toxic?

When AI morphs into an industrial (robotic), utility or military multimodal state, humans are faced with the inscrutable bastard fledgling of that which we do not understand, cannot reject, and might outright depend upon to enhance our survival. And famously, the sleep of reason produces monsters. (Consult Marshall McLuhan and some nuggety McLuhanisms as but one explanatory pathway toward this digression.)

This version of the future is, according to Yann LeCun, Fei-Fei Li, Steve Wozniak, Mustafa Suleyman, William Gibson, Arthur C. Clarke and most other tech visionaries, “scary and very bad for people.”

Strong AGI may choose to ignore the quintessence of what we understand about ourselves—that the relationship of man with nature is in fact interdependent and interconnected. All things share one origin. This information has yet to be culturally assimilated by 100% of humans, of course, but it was never even a debate for the self-teaching algorithm, chained supercomputing or anything else that greases the skids of machine-learning momentum. There is space to seek alternatives to flawed cultural information in technology, to the extent that technology may eventually view human culture and its constituent forms (de facto “code” to its rendering of what it deems real) as something better off defused, tidied-up, neutralized.

Quaint and expired, having been unable to control itself—is that us in ones and zeros? Even AI’s inventors are warning us about the day when an “algorithmic narrative” views its host as a vulgar parasite. They hint that there may not be a “plug” to unplug (Skynet, Blade Runner et al) if we creep past a point of no return, and that strong AI’s override commands might incapacitate our panic buttons and emergency exits.

Worst-case scenario is Hollywood-grade blowback with a tinfoil hat after-party.

… …

…

Sometimes a “thing” can seem to choose us to collect and build it up, give it meaning and context from formlessness, and perpetuate its survival. We are compelled to tweak it, and to risk all to possess the “aha” moment, the pink diamond, the Higgs boson trapped in a supercollider, unicorns of dark matter or the unreachable final digit of pi. In this atmosphere, opportunity and trouble are often sexy together. Their emergence invokes the spooky and can sometimes smell faintly of chaos: synthetic, acrid and crispy.

Irrationality can drive ambitions, and irrationality certainly finds purchase in perceived value, in any market. Technology is not entirely unlike gold, though they inhabit different markets and have separate origins. One is a rare metallic element of high density and luster, while the other is the precious metal of the mind’s eye and the collective knowledge of all beings ever born—concentrated onto the head of a pin. There is exquisite density in these two seemingly disparate items, and both are inert until dislodged and re-purposed to spark the divine in human endeavors.

Gold’s story is ancient and drenched with intrigue. Through a cosmic cycle of birth and explosive death, bigger stars formed that could fuse ever more protons into heavier atoms, up to iron, which has atomic number 26. Heavier elements, such as gold, could not be fused even in the hearts of the biggest stars. Instead they needed a supernova, a stellar explosion large enough to produce more energy in a few Earth weeks than our sun will produce in its entire lifetime.

The next time you look at the gold in your jewelry, you can remind yourself you are wearing the debris of a supernova that exploded in the depths of space. It’s an almost magical story that extends all the way down to the 14-billion-year-old Big Bang dust that the atoms in our bodies are constructed of.

Alas, gold is just a raw material and commodity, a natural resource from a natural world. What we are seeing for the first time is something else, that which extends further into what we have mined, harvested and refined as the “mental gold” of a new age—deep and far-ranging tech innovations that now centrally operate the heart of our world.

… …

…

Conclusion: Trust the Trustlessness

Those transmitting AI into your life want you to believe it will be programmed to serve, and to improve trust. They want the Bitcoin revolution to serve as a prime example. Bitcoin was born in a white paper published on October 31, 2008, just weeks after Lehman Brothers went bankrupt and the government and Federal Reserve started rescuing failing banks. The paper declared that digital commerce was overly dependent on trust in financial institutions. The idea was to create “an electronic payment system based on cryptographic proof instead of trust.”

Effectively, “trustlessness.” Instead of relying on the bankers who repossessed your home while paying themselves massive bonuses, people could opt into a secure, decentralized network called the blockchain. Crypto, the boosters said, would rival the existing centralized financial system in due course. Seventeen years later, this new money, with its token exchanges and decentralized finance (DeFi) lenders, has lost much of its utopian appeal.

If crypto can be hacked—massively hacked—and doesn’t provide a more trustworthy alternative to traditional finance, then what’s it for? So far, its primary users seem to be people fearful of using their own government’s currency because of political or economic risk or because they want to evade law enforcement. Otherwise, its main use has been speculation—gambling on the value of the currencies themselves or digital assets, such as NFTs (non-fungible tokens), purchased with the currencies.

… …

…

AI sub-programs us just as it is programmed, resurrecting a facsimile of the mythical Ouroboros. In this digital world, “trust” in AI and computer deep-learning becomes a fluid and unreachable promise dripped over its own NFT.

People who believe in the ultrahigh-quality output of, say, God might say the part of AI we cannot fully comprehend is also God, but in a way that’s more like FAFO beyond the usual “God help me with this boring-but-important inventory spreadsheet.”

One final point: The give-and-take approach in this article was intended to clarify the benefits of a disciplined independent—yet connected and engaged—symbiotic relationship in the human-AI equation. It strives to be a pillar of authenticity that sits above advocacy, skepticism and the value proposition.

So here’s to that liminal space, and all the constructive arguments we might have in it…

…

. .. ………… . ………… .. .
